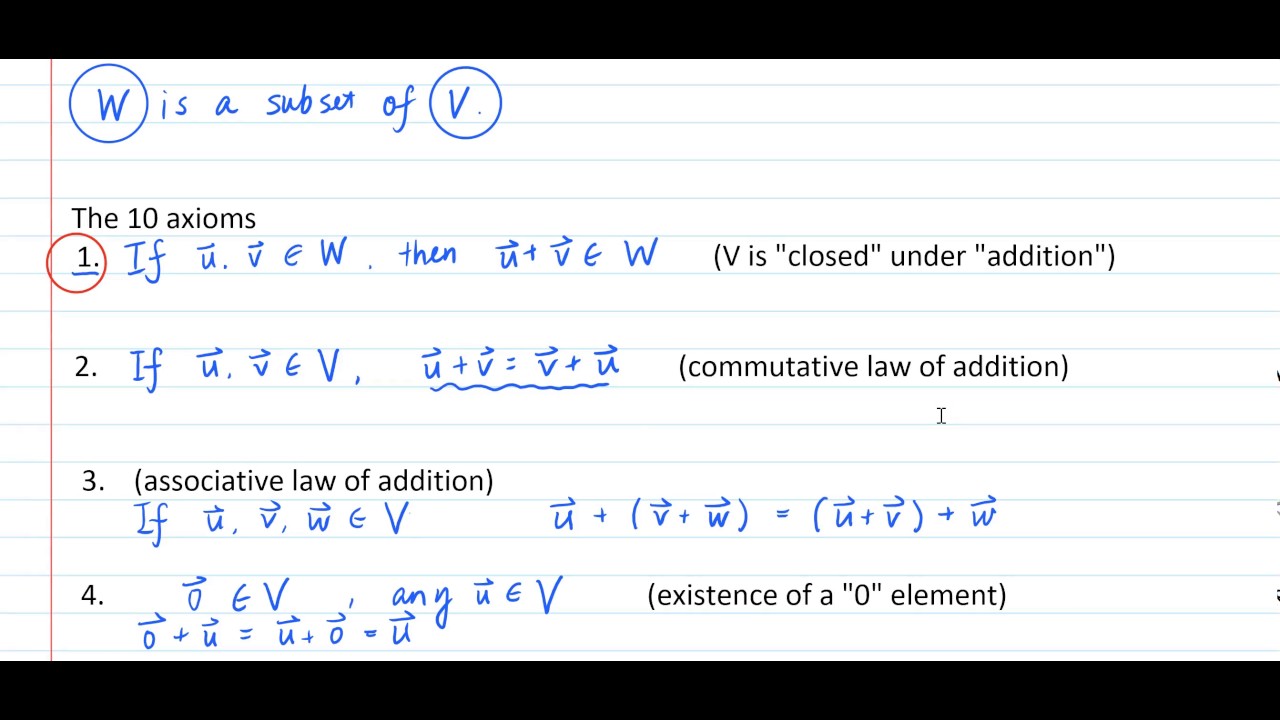

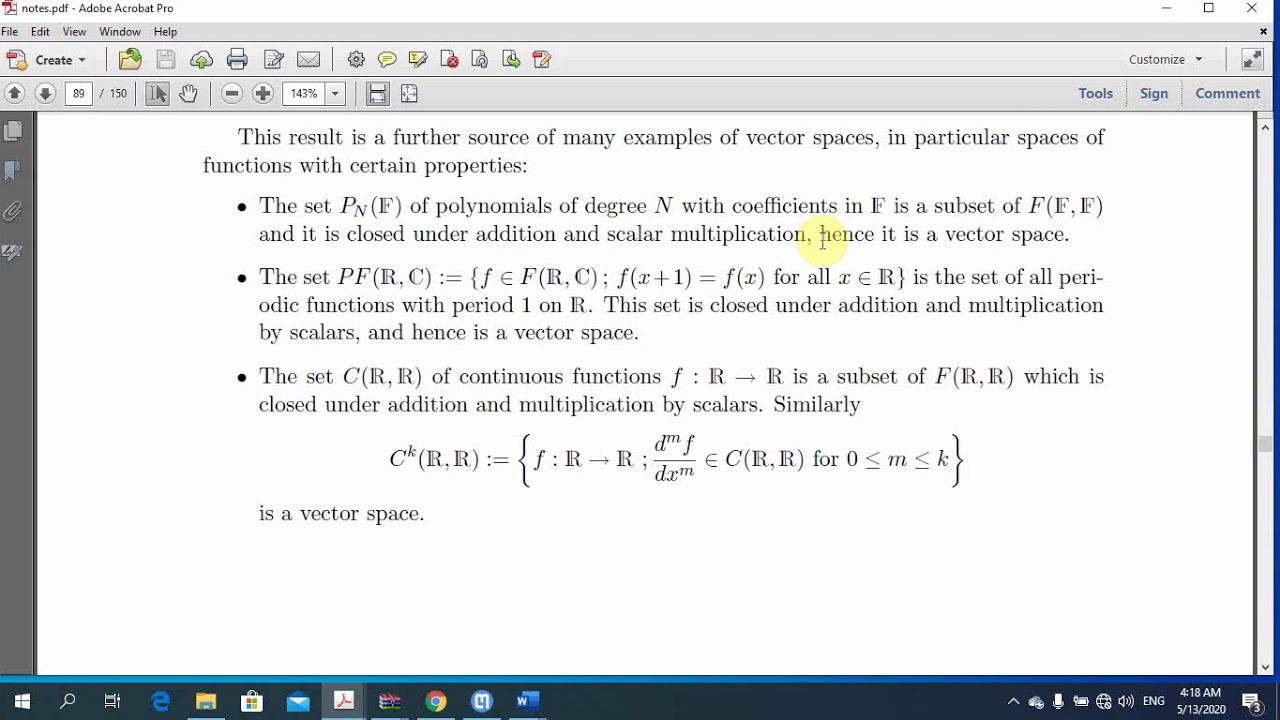

I'm guessing the 'rules' you know for a subspace are:

1) it is non-empty (or equivalently, given the other two rules, it contains the zero vector),
2) it is closed under addition,
3) it is closed under scalar multiplication.

These were not chosen arbitrarily. A subspace is a vector space that is entirely contained within another vector space. The definition of a subspace is a subset that is itself a vector space. In mathematics, and more specifically in linear algebra, a linear subspace or vector subspace is a vector space that is a subset of some larger vector space. Linear spaces are defined in a formal and very general way by enumerating the properties that the two algebraic operations performed on the elements of the spaces (addition and multiplication by scalars) need to satisfy.

Definition (Linear Subspace): A linear subspace of $ \mathbb{R}^n$ is a subset $ U \subseteq \mathbb{R}^n$ that is closed under vector addition and scalar multiplication. That is, for all $ u_1, u_2 \in U$ and $ \alpha \in \mathbb{R}$, it holds that $ u_1 + u_2 \in U$ and $ \alpha u_1 \in U$.

Let’s work in the language of a matrix decomposition $ A = U \Sigma V^T$, more for practice with that language than anything else (using outer products would give us the same result with slightly different computations). Now that we have our decomposition theorem, understanding how the power method works is quite easy. The method we’ll use to solve the 1-dimensional problem isn’t necessarily industry strength (see this document for a hint of what industry strength looks like), but it is simple conceptually.

Now we’re going to write SVD from scratch. We’ll first implement the greedy algorithm for the 1-d optimization problem, and then we’ll perform the inductive step to get a full algorithm.

This tells us that the first singular vector covers a large part of the structure of the matrix. I.e., a rank-1 matrix would be a pretty good approximation to the whole thing. As an exercise to the reader, write a program that evaluates this claim (how good is “good”?).
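As a rough illustration of the 1-d step, here is a minimal sketch of power iteration on $ A^T A$, which approximates the top singular value and its singular vectors. This is not the post's own code: the function names (`top_singular_triple`, `matvec` and so on), the fixed iteration count, and the random seed are all assumptions made for the example, and it uses plain Python lists rather than a numerical library.

```python
import math
import random


def matvec(M, x):
    """Multiply matrix M (a list of rows) by vector x."""
    return [sum(m_ij * x_j for m_ij, x_j in zip(row, x)) for row in M]


def transpose(M):
    """Return the transpose of M as a list of rows."""
    return [list(col) for col in zip(*M)]


def norm(x):
    """Euclidean length of x."""
    return math.sqrt(sum(x_i * x_i for x_i in x))


def top_singular_triple(A, iterations=500, seed=0):
    """Approximate (sigma, u, v) for the largest singular value of A.

    Hypothetical helper: repeatedly applies A^T A to a random unit
    vector and renormalizes. The iteration count is an arbitrary choice.
    """
    rng = random.Random(seed)
    At = transpose(A)
    n = len(A[0])

    # Random starting direction, normalized to unit length.
    v = [rng.gauss(0.0, 1.0) for _ in range(n)]
    length = norm(v)
    v = [x / length for x in v]

    for _ in range(iterations):
        w = matvec(At, matvec(A, v))
        length = norm(w)
        if length == 0.0:  # A v = 0, nothing left to amplify
            break
        v = [x / length for x in w]

    Av = matvec(A, v)
    sigma = norm(Av)
    u = [x / sigma for x in Av] if sigma > 0 else Av
    return sigma, u, v


if __name__ == "__main__":
    sigma, u, v = top_singular_triple([[3.0, 0.0], [0.0, 1.0]])
    print(sigma, u, v)
```

The singular vectors are only determined up to sign, so `u` and `v` may both come out negated. Convergence is slow when the top two singular values are close together, which is one reason this approach is not industry strength.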

This is what you’d expect from real data. Alternatively, you could get to a stage $ v_k$ with $ k 15$ while the other two singular values are around $ 4$. The data does not lie in any smaller-dimensional subspace.
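One way to put a number on how much a dominant singular value matters: the squared Frobenius norm of a matrix is the sum of its squared singular values, and the best rank-1 approximation keeps only the largest one. The sketch below computes the fraction of that total captured by the top singular value. The function name is hypothetical, and the inputs 15, 4, 4 are illustrative values of roughly the size mentioned above, not results taken from the data set.

```python
def rank_one_energy_fraction(singular_values):
    """Fraction of the squared Frobenius norm captured by the largest singular value.

    Hypothetical helper for illustration; expects a non-empty list of
    non-negative singular values, not all zero.
    """
    total = sum(s * s for s in singular_values)
    if total == 0:
        raise ValueError("all singular values are zero")
    top = max(singular_values)
    return (top * top) / total


if __name__ == "__main__":
    # Illustrative values only: one large singular value and two small ones.
    print(rank_one_energy_fraction([15.0, 4.0, 4.0]))
```

A fraction close to 1 means a rank-1 matrix reproduces most of the matrix in the Frobenius sense. A fraction near $ 1/r$ for a rank-$ r$ matrix means no single direction dominates.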

This means that the data in $ A$ contains a full-rank submatrix. Let $ T : V \to W$ be a linear transformation and let $ U$ be a subspace of $ V$. We start with the best-approximating $ k$-dimensional linear subspace.

Definition: Let $ X = \^n$.

The data set we test on is a thousand-story CNN news data set. All of the data, code, and examples used in this post are in a GitHub repository, as usual.

consistent linear system: A system of linear equations is consistent if it has at least one solution. See also: inconsistent.

The column space of a matrix is the subspace spanned by the columns of the matrix considered as vectors. See also: row space.

This post will be theorem, proof, algorithm, data. I’m just going to jump right into the definitions and rigor, so if you haven’t read the previous post motivating the singular value decomposition, go back and do that first.
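The two glossary definitions above fit together: a system $ Ax = b$ is consistent exactly when $ b$ lies in the column space of $ A$, which is the same as saying that appending $ b$ as an extra column does not increase the rank. Here is a minimal sketch of that check using exact rational arithmetic. The function names `rank` and `is_consistent` are assumptions made for this example, and plain Gaussian elimination is used only because it is easy to read.

```python
from fractions import Fraction


def rank(M):
    """Rank of M (a list of rows) via Gaussian elimination over the rationals."""
    rows = [[Fraction(x) for x in row] for row in M]
    n_cols = len(rows[0]) if rows else 0
    r = 0  # number of pivots found so far
    for c in range(n_cols):
        # Find a row at or below position r with a non-zero entry in column c.
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        # Eliminate column c from every row below the pivot row.
        for i in range(r + 1, len(rows)):
            factor = rows[i][c] / rows[r][c]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r


def is_consistent(A, b):
    """True if Ax = b has at least one solution, i.e. b is in the column space of A."""
    augmented = [list(row) + [b_i] for row, b_i in zip(A, b)]
    return rank(A) == rank(augmented)


if __name__ == "__main__":
    A = [[1, 2], [2, 4]]
    print(is_consistent(A, [3, 6]))  # b is a multiple of the first column
    print(is_consistent(A, [3, 7]))  # b is outside the column space
```

Exact fractions avoid the tolerance question that floating-point rank computations raise. With real data you would more likely look at the singular values and decide how small counts as zero.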
